The art world has confronted forgeries for hundreds of years. To combat forgers, galleries & auction houses test the provenance of paintings.

Provenance is a combination of the identity & credibility of the actors involved in the painting’s lifecycle, as well as the timing of key lifecycle moments.

From Wikipedia:

- the beginning of something’s existence; something’s origin.

- a record of ownership of a work of art or an antique, used as a guide to authenticity or quality.

If a painting’s provenance cannot be established, or is contradictory, then its credibility is questioned.

The same concept of provenance, with analogous tests, can be applied to combating the growing problem of deepfake videos.

What is a deepfake?

A deepfake video is a fraudulent copy of an authentic video or image, manipulated to create a false interpretation of the events that the original captured. Increasingly, artificial intelligence (AI) can be used to create very realistic videos portraying events that never occurred. Viewers who cannot discern the forgery may be fooled into believing that those contrived events did in fact occur, and misinterpret them accordingly.

Current deepfakes typically manifest as the superposition of one individual’s face onto some other person speaking – creating the illusion of the first person saying the words of the second.

The ‘deep’ in the name derives from the fact that creating such videos relies on ‘deep learning’. The software takes images of the first person and analyzes those images to develop a model of the source face. Once that model is learned, it can be mapped onto the face in the frames of the target video.

How to combat deepfakes

The following are potential mechanisms for identifying a deepfake video. For each, we draw an analogy with how the art world combats forgeries.

Analysis of the video’s content

Just as AI is used to create deepfakes, it can be used to spot them. Deepfake videos often give away their artificial origin. For instance, the generated faces may not blink as often as real people, who blink roughly once every 10 seconds. This reflects the fact that the source images from which the AI learns generally don’t include examples of the subject blinking.

A detection AI can analyze a video, count blinks (and other similar metrics) to identify possible deepfakes. But of course, those creating the deepfakes are aware of this defense and are improving both the variety of source images and the AI itself.
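To make the blink test concrete, here is a minimal sketch that counts blinks from a per-frame eye-openness signal and flags clips whose blink rate is implausibly low. It assumes some upstream face-landmark detector already produces one openness score per frame (an eye aspect ratio, for example); the function names and threshold values are hypothetical and untuned, not a production detector.

```python
from typing import Sequence


def count_blinks(openness: Sequence[float], closed_threshold: float = 0.2,
                 min_closed_frames: int = 2) -> int:
    """Count blinks as runs of at least `min_closed_frames` consecutive frames
    whose eye-openness score is below `closed_threshold` (hypothetical values)."""
    blinks = 0
    run = 0  # length of the current run of "eyes closed" frames
    for score in openness:
        if score < closed_threshold:
            run += 1
        else:
            if run >= min_closed_frames:
                blinks += 1
            run = 0
    if run >= min_closed_frames:  # the clip may end mid-blink
        blinks += 1
    return blinks


def looks_suspicious(openness: Sequence[float], fps: float,
                     min_blinks_per_minute: float = 3.0) -> bool:
    """Flag a clip whose blink rate falls below a plausible human minimum.

    The default is a hypothetical, deliberately loose threshold: half of the
    roughly once-every-10-seconds (6 per minute) rate."""
    minutes = len(openness) / fps / 60.0
    if minutes == 0:
        return False  # nothing to judge
    return count_blinks(openness) / minutes < min_blinks_per_minute
```

A 30-second clip at 30 fps in which the eyes never close would be flagged, while the same clip with a blink every 6 seconds would not. As the text notes, forgers can defeat any single metric like this one, which is why it is only one signal among several.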

An analogy in the art world would be a painting purportedly by Michelangelo that was done in the style of Matisse or Rembrandt.

Verification of the video’s origin & integrity

If the creator of the original video digitally signs it, and distributes that signature with the video, then downstream consumers can be confident that the video they are viewing was indeed created by that creator and not subsequently modified.

Any attacker could modify the original to create a deepfake and then sign that deepfake themselves for distribution. However, the attacker’s public key would not be on a whitelist of ‘known & reputable’ creators (maintained either by the industry or personally by each consumer), and so consumers could spot the deepfake.

Digital signatures do not guard against a corrupt or compromised video creator making a deepfake, signing it and then distributing it. Consumers would assume that the signature was created in good faith and interpret the deepfake as credible.
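The sign, verify and whitelist logic above, including the limitation just described, can be sketched as follows. Python’s standard library has no public-key signature API, so this sketch uses textbook RSA with hard-coded Mersenne primes purely so it runs without dependencies. It is insecure and for illustration only; a real system would use a vetted scheme (such as Ed25519) from a maintained cryptography library. All names here (`make_keypair`, `is_trusted`, and so on) are hypothetical.

```python
import hashlib
from typing import NamedTuple, Set, Tuple

PublicKey = Tuple[int, int]  # (modulus n, exponent e)


class KeyPair(NamedTuple):
    n: int  # public modulus
    e: int  # public exponent
    d: int  # private exponent

    @property
    def public(self) -> PublicKey:
        return (self.n, self.e)


def make_keypair(p: int, q: int, e: int = 65537) -> KeyPair:
    """Textbook RSA from two known primes. INSECURE: illustration only."""
    return KeyPair(p * q, e, pow(e, -1, (p - 1) * (q - 1)))


def digest(data: bytes, n: int) -> int:
    """SHA-256 of the video bytes, reduced into the RSA group (toy only)."""
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % n


def sign(data: bytes, key: KeyPair) -> int:
    return pow(digest(data, key.n), key.d, key.n)


def verify(data: bytes, signature: int, public_key: PublicKey) -> bool:
    n, e = public_key
    return pow(signature, e, n) == digest(data, n)


def is_trusted(data: bytes, signature: int, public_key: PublicKey,
               whitelist: Set[PublicKey]) -> bool:
    """Accept a video only if the signer is a known, reputable creator AND
    the signature matches these exact bytes."""
    return public_key in whitelist and verify(data, signature, public_key)


# Hard-coded Mersenne primes keep the example deterministic; never do this for real.
creator = make_keypair(2**89 - 1, 2**107 - 1)
attacker = make_keypair(2**61 - 1, 2**127 - 1)
whitelist = {creator.public}

original, fake = b"original video bytes", b"deepfake video bytes"
sig = sign(original, creator)
print(is_trusted(original, sig, creator.public, whitelist))                # True
print(is_trusted(fake, sig, creator.public, whitelist))                    # False: bytes changed
print(is_trusted(fake, sign(fake, attacker), attacker.public, whitelist))  # False: unknown key
print(is_trusted(fake, sign(fake, creator), creator.public, whitelist))    # True: compromised creator
```

The last line shows the gap described above: a whitelisted key that signs a deepfake produces a signature that verifies perfectly well.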

By analogy, if a painting purported to have been painted by Michelangelo had a signature in the lower right corner that did not match known instances of Michelangelo’s true signature, then that would be evidence of a forgery.

Verification of the video timestamp

If a video can demonstrate that it was created within a window of time consistent with the known timing of the events it captures, then that temporal consistency is an indicator of its credibility. Conversely, if a video cannot demonstrate that consistency, or is explicitly inconsistent, then this can be interpreted as a sign of a potential deepfake.

Similarly, if two versions of a given video have different timestamps, then we can reasonably assume that the later version is a copy of the earlier one, and so a fake.
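Both temporal tests can be written down as simple checks. This is a minimal sketch assuming each version already carries a verified timestamp; the `VideoVersion` type, the function names and the `max_delay` tolerance are hypothetical choices, and “earliest is the presumptive original” is the heuristic from the text, not proof.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List


@dataclass
class VideoVersion:
    label: str
    timestamp: datetime  # when this version's hash was (verifiably) timestamped


def consistent_with_event(version: VideoVersion, event_time: datetime,
                          max_delay: timedelta) -> bool:
    """Footage cannot have existed before the event it shows, and a timestamp
    long after the event is weaker evidence. `max_delay` is a hypothetical tolerance."""
    return event_time <= version.timestamp <= event_time + max_delay


def suspected_copies(versions: List[VideoVersion]) -> List[VideoVersion]:
    """Treat the earliest-timestamped version as the presumptive original and
    every later one as a suspected copy."""
    return sorted(versions, key=lambda v: v.timestamp)[1:]


event = datetime(2020, 3, 1, 12, 0)
a = VideoVersion("a", datetime(2020, 3, 1, 12, 5))
b = VideoVersion("b", datetime(2020, 3, 1, 14, 0))
print(consistent_with_event(a, event, timedelta(hours=1)))  # True
print(consistent_with_event(b, event, timedelta(hours=1)))  # False
print([v.label for v in suspected_copies([b, a])])          # ['b']
```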

By analogy, if a painting purported to have been painted by Leonardo da Vinci were found to use a paint formula unavailable to artists of the time, then that would be evidence of forgery. Art historians also compare the patterns of cracks in the paint with the patterns typical of paintings from different regions and periods, and determine the age of the wood of the frame by analyzing its growth rings, to help date a painting. In response, forgers may source wood from furniture they know dates from the year of the fake they are creating and use it for the frame.

How might a distributed ledger help?

As indicated above, temporal evidence is one means of spotting forged paintings.

A Distributed Ledger Technology (DLT) can play a role in establishing a video’s provenance, and so may help combat deepfakes by providing an incontrovertible temporal provenance for authentic videos, and with it a key test for spotting deepfakes.

DLTs assign transactions a place in a consensus order, such that all participants can apply those transactions to some shared state in that order, and thereby ensure that all copies of that state remain consistent.

A by-product of this functionality is that DLTs can provide a distributed timestamping service, in which the network assigns transactions a timestamp. Clients wishing to timestamp some piece of data submit a transaction carrying that data to the network, which, after processing the transaction into consensus, assigns it (and so the data) a consensus timestamp T.

This timestamp is logically an assertion by the network of the form ‘This data existed at time T’. It is manifestly not an assertion as to the truthfulness or veracity of the data. That is determined by some other layer.

Critically, a timestamp from a DLT comes from the network as a whole rather than from any single entity, because the network establishes it collaboratively. Consequently, consumers do not need to trust a single timestamp server operator, but rather the collective network of DLT nodes (and of course the consensus algorithm, software, etc.).
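One way a network can assign a timestamp that no single node controls is to take the median of the times at which the individual nodes received the transaction. Hedera’s hashgraph algorithm is described as deriving its consensus timestamp from a median of this kind; the sketch below is a simplified stand-in under that assumption, not Hedera’s actual algorithm, and `consensus_timestamp` is a hypothetical name.

```python
from statistics import median_low
from typing import List


def consensus_timestamp(receipt_times: List[float]) -> float:
    """Assign a timestamp as the median of the times (seconds since some epoch)
    at which each node first received the transaction.

    median_low always returns one of the reported values. If fewer than half
    the nodes misreport, the result stays within the range of the honest
    nodes' times, so no single node controls the timestamp."""
    if not receipt_times:
        raise ValueError("need at least one node's receipt time")
    return median_low(receipt_times)


# Four nodes; one reports a wildly wrong time in either direction.
print(consensus_timestamp([100.0, 100.2, 100.4, 9e9]))  # 100.2
print(consensus_timestamp([0.0, 100.0, 100.2, 100.4]))  # 100.0
```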

DLT characteristics in context of deepfake use case

The specific capabilities of a public DLT, largely determined by its consensus algorithm, will affect the security, latency, and throughput of its timestamping, and so its ability to help mitigate deepfakes.

Accuracy

Some DLTs do not specifically assign a timestamp to a transaction, but rather assign transactions a place in a consensus order, from which some necessarily coarse assumptions about the timestamp of transactions within that order can be made, e.g. ‘The transaction is in block 1378 and the miner who created that block indicated a time of T’.

Other DLTs first assign a timestamp to transactions, and then base the order on those assigned timestamps. The latter type of DLT will generally be able to provide more accurate timestamps.
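The coarseness of block-based timestamps can be illustrated with a simple model. This sketch assumes every transaction lands in the very next block, a fixed block interval (600 seconds here, similar to Bitcoin’s ten-minute target) and an honest block time; real miner-reported times can be off further. The function names are hypothetical.

```python
import math
from typing import List


def block_based_timestamps(submit_times: List[float], block_interval: float) -> List[float]:
    """Coarse model: each transaction waits for the next block and inherits that
    block's time, so every transaction in a block shares one timestamp."""
    return [math.ceil(t / block_interval) * block_interval for t in submit_times]


def worst_error(assigned: List[float], actual: List[float]) -> float:
    """Largest gap between an assigned timestamp and the true submission time."""
    return max(abs(a - t) for a, t in zip(assigned, actual))


submits = [1.0, 299.0, 301.0]
coarse = block_based_timestamps(submits, block_interval=600.0)
print(coarse)                        # [600.0, 600.0, 600.0]
print(worst_error(coarse, submits))  # 599.0
```

Transactions submitted five minutes apart become indistinguishable, and the error can approach a full block interval. A DLT that timestamps each transaction first, then orders by those timestamps, avoids this particular source of error.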

Latency

Latency measures how long it takes for a DLT to process a transaction into consensus such that parties can be confident the consensus timestamp, order, and application to state won’t be changed.

In the deepfake context, there is another latency to consider: how long it takes to create a deepfake from a valid original. Deepfakes require that the source images be used to train an AI model, and that training takes time.

The longer the DLT latency, the greater the delay in the valid video obtaining a timestamp, and so the greater the opportunity for a deepfake copy of the original to be created and obtain an earlier timestamp – and so potentially establish itself as the valid version.

As AI improves, this AI latency should be expected to decrease. Consequently, the lower a DLT’s consensus latency, the greater its value in mitigating deepfakes.

Finality

Finality of consensus refers to the nature of the certainty of consensus, i.e. whether a given actor gains a steadily growing confidence that a transaction has received a consensus timestamp, or instead has a sudden & irrevocable ‘epiphany’ as to the consensus timestamp for that transaction.

The latter kind of finality simplifies the interpretation of a timestamp (and so the processing of a video) in the period directly following the video’s creation and submission to the DLT. Consider a video submitted to a DLT that did not provide finality of consensus. If a consumer were presented with that video before its timestamp had been fully ‘locked in’, the consumer would need to decide whether the current level of confidence was sufficient to accept the video as genuine.

In contrast, the timestamp of a DLT with finality requires no such subjectivity – once assigned to a transaction, it will not be changed.

Lack of finality is less of an issue if a long time has elapsed between the issuance of a timestamp and the need to process a video, since non-final consensus asymptotically approaches finality over long timespans. However, as AI improves and deepfakes can be created more quickly, early processing (and so finality) will become more valuable.
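The subjective decision a consumer faces without finality can be sketched using the simplified estimate from the Bitcoin whitepaper, in which an attacker with fraction q of the mining power catches up from z blocks behind with probability (q/p)^z. The function names and the risk tolerance are hypothetical; the point is only that, without finality, acceptance depends on a threshold each consumer must choose, while with finality it does not.

```python
def reversal_probability(q: float, z: int) -> float:
    """Simplified gambler's-ruin estimate: the chance that an attacker with
    fraction q of the mining power ever catches up from z blocks behind."""
    p = 1.0 - q
    if q >= p:
        return 1.0
    return (q / p) ** z


def accept_without_finality(q: float, confirmations: int, max_risk: float) -> bool:
    # The consumer picks its own risk tolerance, and the answer changes
    # as more confirmations accumulate.
    return reversal_probability(q, confirmations) <= max_risk


def accept_with_finality(is_final: bool) -> bool:
    # Once final, the timestamp will never change, so no tolerance is needed.
    return is_final
```

With q = 0.1 and a tolerance of one in a thousand, a consumer would reject the timestamp after one or three confirmations but accept it after six; a different consumer with a different tolerance could reach a different answer for the same video.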

Performance

Using a DLT to issue timestamps to videos requires that the DLT support both the corresponding number of writes (e.g. ‘give this data a consensus timestamp’) and reads (e.g. ‘is there a consensus timestamp for this data?’).

As an indication of video volume, statistics indicate that 5 hours of video are uploaded to YouTube every second. If an average video lasts 10 seconds, that implies 1800 video uploads per second, with a corresponding demand for DLT throughput.
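The arithmetic behind that estimate is short enough to write out. This sketch assumes one timestamp write per uploaded video and uses only the figures above; read volume is not estimated because the text gives no figure for it, and the 60-second line is a purely hypothetical comparison.

```python
SECONDS_PER_HOUR = 3600
SECONDS_PER_DAY = 86_400


def uploads_per_second(video_hours_per_second: float, avg_video_seconds: float) -> float:
    """Uploads per second implied by a volume of video and an average video length."""
    return video_hours_per_second * SECONDS_PER_HOUR / avg_video_seconds


rate = uploads_per_second(5, 10)   # 5 h = 18,000 s of video each second
print(rate)                        # 1800.0
print(rate * SECONDS_PER_DAY)      # 155520000.0 timestamp writes per day
print(uploads_per_second(5, 60))   # 300.0 if the average video were a minute long
```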

Hedera mechanisms

Hedera is a public DLT that can support the timestamp use case, and so contribute to protection against deepfakes.

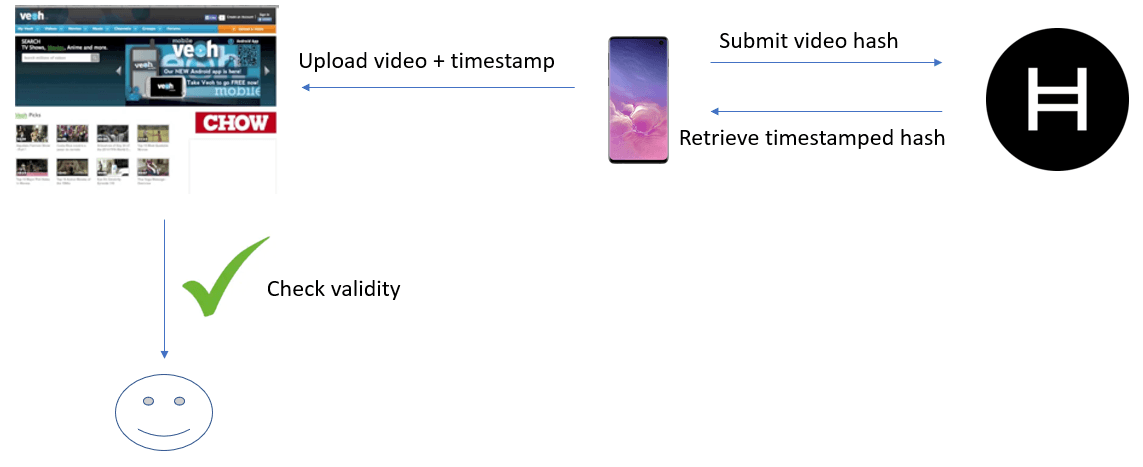

We present one possible model here:

- A video camera films some event

- The camera calculates a hash of the video file and submits it in a transaction to the Hedera Consensus Service (HCS). HCS enables a model in which data is timestamped by the Hedera network but not persisted in the consensus state of the mainnet, and so can support the sort of high volume that a deepfake solution would imply. The node receiving the transaction gossips it out to the network. After a few seconds, the transaction is processed into consensus and assigned a consensus timestamp

- The camera then queries a mirror network node for the record associated with the transaction, and requests that a StateProof also be returned

- The responding node returns the record (which contains the consensus timestamp & the hash of the video) & a state proof (which is a portable, cryptographically secure attestation from the network as a whole as to the validity of the record)

- Camera adds StateProof to the video metadata

- Camera uploads video with metadata to video sharing site

- Subsequent viewers of the video see a UI indicating:

  - the consensus timestamp of the transaction that submitted the video hash

  - that the timestamp was assigned by the Hedera network

  - that the hash within the Hedera transaction record matches that of the video

Consequently, viewers can be confident that the video existed at the time of the timestamp, and so necessarily was created before that time. With that known time frame for the creation of the video, comparisons can be made to:

- Other versions of the video that purport to be valid

- Known time of the events that the video purports to capture
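The camera and viewer steps in the model above can be sketched in a few lines. The HCS submission and the mirror-node query go through the Hedera SDKs and are deliberately omitted here rather than guessed at. `video_hash`, `TimestampRecord` and `viewer_check` are hypothetical names, `TimestampRecord` is a simplified stand-in rather than the SDK’s record type, and StateProof verification is assumed to happen elsewhere (represented by the `proof_valid` flag).

```python
import hashlib
import io
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import BinaryIO


def video_hash(stream: BinaryIO, chunk_size: int = 1 << 20) -> str:
    """Camera side: SHA-256 of the video, read in 1 MiB chunks so large files
    never need to fit in memory. The hex digest is what would be submitted."""
    h = hashlib.sha256()
    for chunk in iter(lambda: stream.read(chunk_size), b""):
        h.update(chunk)
    return h.hexdigest()


@dataclass
class TimestampRecord:
    """Hypothetical, simplified stand-in for the record a mirror node returns."""
    consensus_timestamp: datetime
    message_hash: str  # the hash the camera submitted


def viewer_check(stream: BinaryIO, record: TimestampRecord, proof_valid: bool) -> str:
    """Viewer side. `proof_valid` stands in for StateProof verification,
    which is assumed to happen elsewhere."""
    if not proof_valid:
        return "unverified: state proof missing or invalid"
    if video_hash(stream) != record.message_hash:
        return "mismatch: this is not the video that was timestamped"
    return f"existed by {record.consensus_timestamp.isoformat()}"


clip = io.BytesIO(b"example video bytes")
record = TimestampRecord(datetime(2020, 1, 1, tzinfo=timezone.utc), video_hash(clip))
clip.seek(0)  # rewind: the hash above consumed the stream
print(viewer_check(clip, record, proof_valid=True))  # existed by 2020-01-01T00:00:00+00:00
```

Note that a successful check only establishes that these exact bytes existed by the consensus timestamp; any edit to the file, however small, changes the hash and fails the check.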

Conclusion

There is an emerging arms race between AI creating deepfakes and the tools that will spot them.

None of the mechanisms described above is sufficient on its own to stop deepfakes. For instance, the lack of a signature on a video can only be interpreted as evidence of a forgery if all valid videos are signed (which is unlikely in the near future). Similarly, while timestamps can complicate backdating a deepfake, i.e. an attacker fooling people into thinking a new video was created in the past, a timestamp on a video cannot determine when the video was filmed, only when it was timestamped.

As with so many threats, layered security is the best approach to combating deepfakes. Secure timestamps from public DLTs could be one part of the solution.

Attestiv is building on Hedera to combat deepfakes. To learn more, listen to the Hedera Hashgraph Gossip about Gossip episode with Attestiv.